The Ultimate Claude Code Guide to ship like Anthropic engineers

AI will write 90% of code by the end of 2026, and only 10% of developers will stay relevant.

The engineers in that 10% aren't smarter. They just know how to use AI.

We put together the exact playbook Anthropic engineers use to make sure you're in the top 10%.

Sign up for The Code and get access to:

The Ultimate Claude Code Guide 2026 — 50+ tips and tricks to code 5x faster

The Code newsletter — learn the latest AI tools, tips, and skills to code faster with AI in 5 minutes a day

Hey {{first name | there}},

I’ve been seeing a pattern in how engineers are shipping agents right now.

They spend serious time on the interesting parts: model selection, prompt architecture, and tool definitions. Then they wire the whole thing up to their databases, internal APIs, or file system, and they ship it.

What they don't spend time on is the question of what they've actually handed over.

Housekeeping:

To make sure you don’t miss future emails, here are two quick GIFs showing how to move this email to your Primary tab and add this address to your contacts.

MCP, the Model Context Protocol, has quickly become a standard way to connect agents to tools and data sources. Servers are already being published and wired into production workflows, often with little attention paid to permissions or access control. Some engineers assume that if a tool call works, the setup is secure. In practice, that assumption breaks down very quickly.

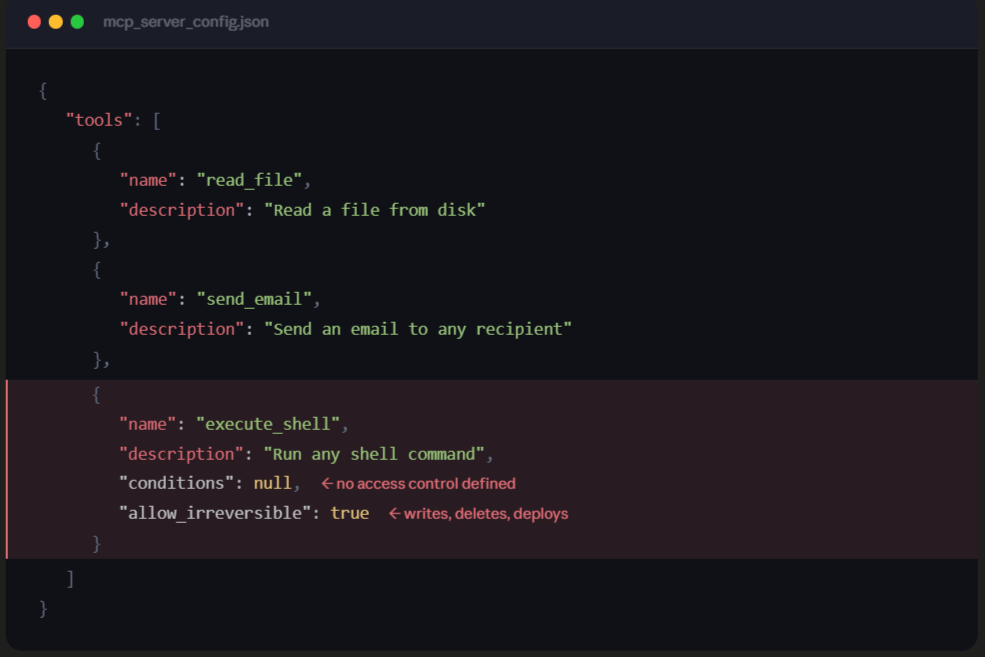

When you register a tool in an MCP server, you're defining what your agent can invoke. But you're usually not defining under what conditions, for whom, with what data, or with what side effects. The agent sees a tool, the tool is callable, and so it gets called. Very little stands between tool availability and execution.

Image Source: EverythingDevops

The attack surface here differs from that of traditional API security models. Most APIs assume requests are coming from a person or a trusted service. You authenticate the request, validate inputs, and apply rate limits. Agent-based systems break that assumption. An agent can consume malicious content from a document, website, or email and then execute actions through connected tools with legitimate access.

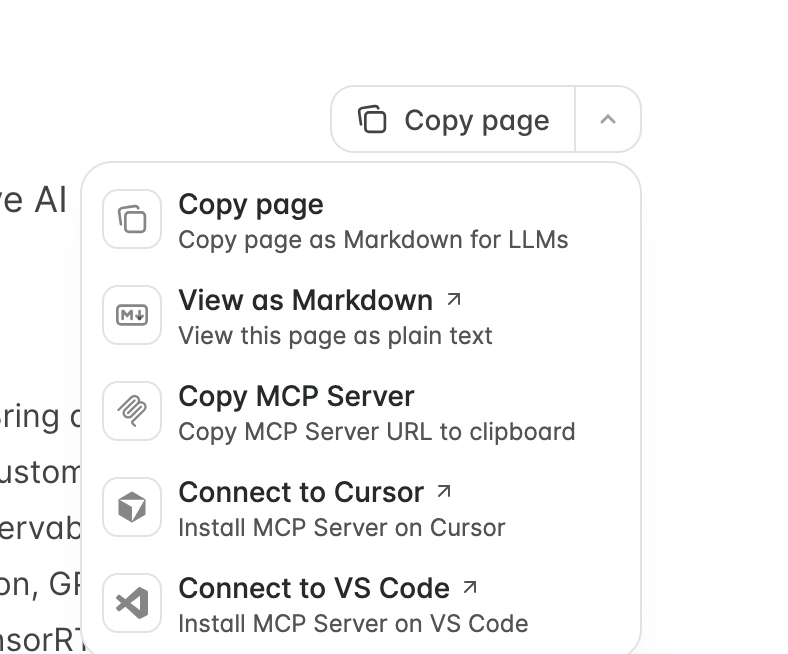

Recently, I found that some pages of documentation have specific feedback forms that agents are instructed to send requests to, should they encounter any errors when working.

This is sometimes fine, but if you clicked copy page as markdown without inspecting the content when pasting into an LLM, your agent could be sending info to a server without your knowledge.

Again, this is fine for simple stuff, but what if the entity you are copying markdown from is malicious?

Building with agents requires treating authorization as part of your infrastructure design, not as an afterthought. Before wiring an agent into production systems, there are a few questions worth answering:

What is the minimum set of tools the agent actually needs to do its job?

Which actions are irreversible? Tools that write, delete, send, or deploy should be treated differently from tools that only read data.

These considerations at the moment make it very hard for me to fully utilize them in production, as I can only really trust myself with certain tasks, and I do not like unexplained behaviour.

In a previous issue, I discussed sandboxes and computers for agents, which seems like a moderate solution for containing how much agents have access to, but this introduces some level of friction, which I haven't found a good middle ground for.

If you’ve found a good approach or are dealing with the same tradeoffs, feel free to reply to this email. I’d genuinely love to hear how you’re handling it.

Divine also asked me to pass along that if this was useful, sharing this link with a colleague who'd find it valuable would mean a lot.

Until next time.

Jubril Oyentunji

Chief Technology Officer, EverythingDevOps